AI is not software. Here's what it actually is, and why that changes everything.

TL;DR for busy founders: Traditional software follows rules you write. AI learns patterns from experience, like a person, not a program. This means it needs context, data, and clear direction to do useful work. It does not arrive ready to go. Understanding this one distinction will save you from the most common and expensive mistake founders make when they start building with AI.

Most founders come into their first AI conversation with a version of the same expectation: we plug it in, point it at our business, and it works.

It's a reasonable assumption. That's more or less how software has worked for the last 40 years. You buy a CRM, you set it up, you use it. The system does exactly what it was programmed to do, every time, without deviation.

AI is fundamentally different. Not a little different. Structurally different. And if you start building with the wrong mental model, you'll spend real money solving the wrong problems.

This post is about giving you the right mental model before you spend a penny.

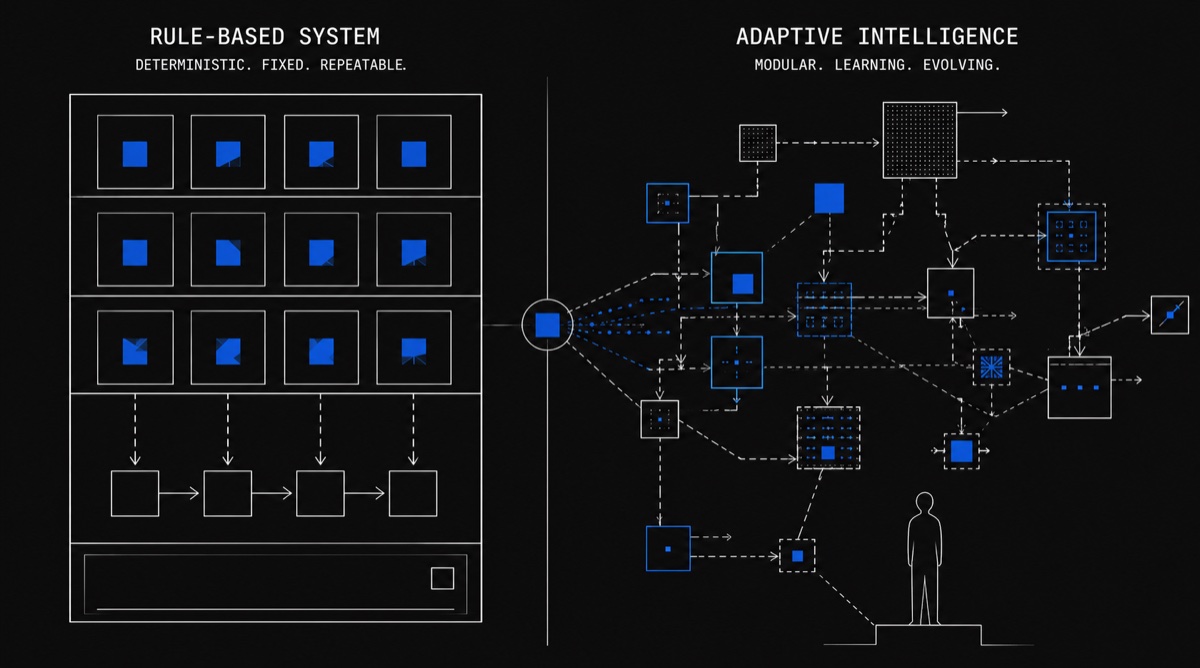

The old world: software that follows rules

Traditional software is a set of instructions written by a human. Every possible scenario is accounted for in advance. If a customer's order is over £100, apply the discount. If the form field is empty, show an error. If the user clicks this button, open that screen.

It's deterministic. Rule A always produces Result A. The system doesn't have opinions. It doesn't adapt. It doesn't improve from experience. It does exactly and only what it was told to do.
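To make that concrete, here is a minimal Python sketch of two of the rules above. Only the £100 threshold comes from the example; the 10% rate, the function names, and the error message are assumptions for illustration.

```python
from typing import Optional

DISCOUNT_THRESHOLD_GBP = 100.0  # from the example in the text
DISCOUNT_RATE = 0.10            # assumed rate, purely illustrative


def order_total(subtotal_gbp: float) -> float:
    """Rule: if the order is over the threshold, apply the discount."""
    if subtotal_gbp > DISCOUNT_THRESHOLD_GBP:
        return round(subtotal_gbp * (1 - DISCOUNT_RATE), 2)
    return subtotal_gbp


def validate_field(value: str) -> Optional[str]:
    """Rule: if the form field is empty, return an error message."""
    if not value.strip():
        return "This field is required."
    return None
```

Call `order_total` with the same number a thousand times and you get the same answer a thousand times. Anything the rules don't mention, such as a loyalty discount, simply doesn't exist until someone writes it.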

This is why software is reliable, auditable, and predictable, and also why building it is slow. Every edge case has to be anticipated. Every new scenario has to be explicitly coded. The programmer's job is to imagine every possible future state and write a rule for it.

That model has worked brilliantly for decades. But it has a hard ceiling.

The new world: software that learns from patterns

AI, and specifically the kind being deployed in business today (large language models), works on a completely different principle.

Instead of following rules written by a programmer, it learns patterns from a vast number of examples. It has processed billions of documents, conversations, and pieces of text. From that, it has developed a deep, nuanced understanding of how language works, how problems are structured, and how answers tend to look.

It doesn't have a rulebook. It has experience.

The analogy that tends to land:

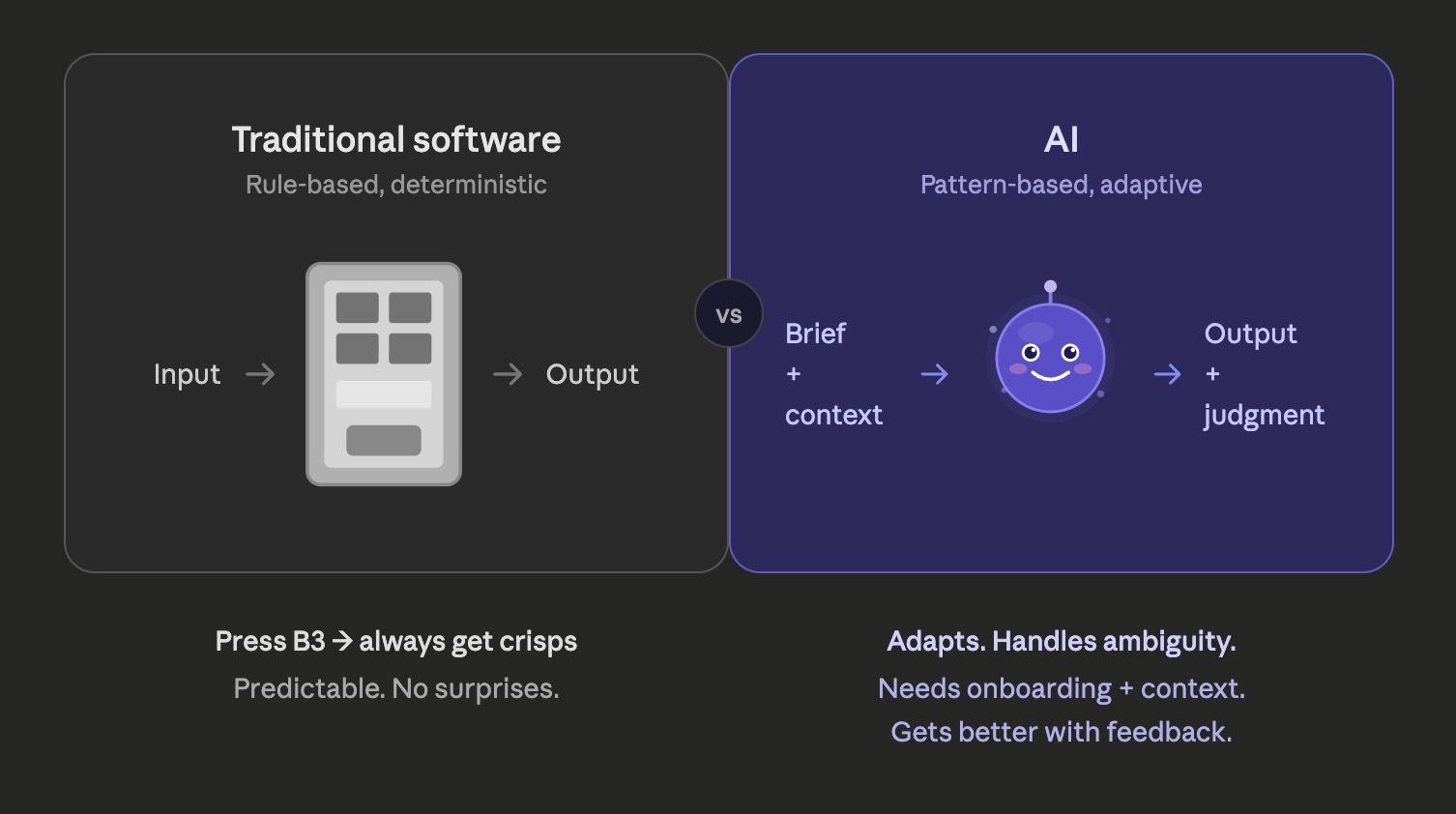

Traditional software is a vending machine. You press B3, you get crisps. Every time. It cannot give you anything that isn't already loaded into it, and it will never do anything you didn't explicitly programme it to do.

AI is closer to a new employee, one who arrived with years of general experience and is genuinely capable, but who knows nothing specific about your business yet. They need onboarding. They need context. They need clear direction. But once they understand what you're trying to do, they can handle ambiguity, adapt to new situations, and, with feedback, get meaningfully better over time.

That shift, from vending machine to new employee, changes almost everything about how you plan, scope, and manage an AI project.

Why the "plug it in" assumption is so costly

When founders assume AI works like traditional software, they skip the step that matters most: giving the AI what it needs to actually be useful.

Here's what that looks like in practice. A founder decides they want an AI assistant to help their team handle client enquiries. They sign up for an API, have someone wire it into their inbox, and wait for it to perform.

What they get back is a polite, articulate, completely generic response, because the AI knows nothing about their business, their clients, their tone, their processes, or their products. It's like hiring someone and sending them straight to the front desk without telling them what the company does.

The AI didn't fail. The setup failed.

This is the pattern we see repeatedly: in professional services firms, retail businesses, real estate agencies, and anywhere else founders are curious about AI but come to it with a software mindset.

The technology is genuinely capable. But capability without context produces noise, not value.

What AI actually needs to do useful work

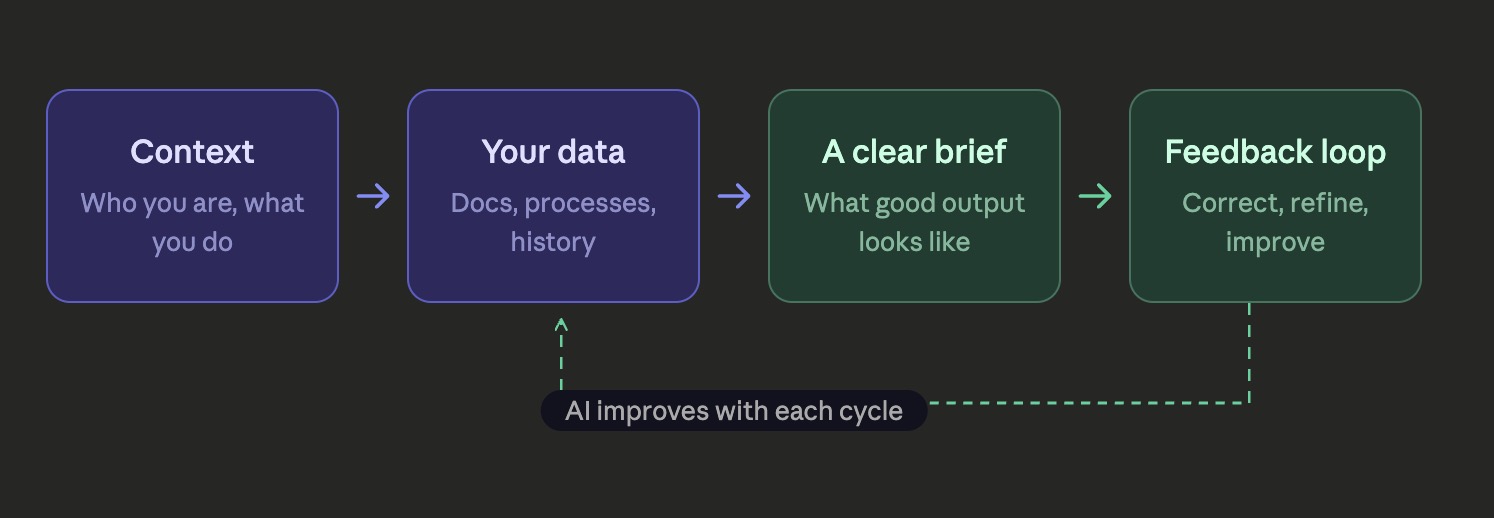

Think back to that new employee analogy. What would you give a smart, capable hire on their first day to set them up for success?

- Context about the business: what you do, who your customers are, what matters.

- Access to the right information: internal documents, processes, past work.

- A clear brief: what does good look like? What are the boundaries?

- Feedback loops: a way to course-correct when the output isn't right.

AI needs exactly the same things.

The technical term for giving AI access to your business information is RAG (Retrieval-Augmented Generation), covered in depth in Episode 3. But the principle is simple: AI doesn't arrive knowing your Q3 sales figures, your client onboarding process, or the specific way your firm likes to write proposals. That data has to be connected to it deliberately.
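For the curious, here is a rough Python sketch of the retrieval idea. It is a toy under stated assumptions: the documents are hypothetical, and simple word overlap stands in for the search method a real system would use. No AI model is called; the sketch only shows how relevant business information gets found and placed in front of the model.

```python
import re

# Hypothetical internal documents; in practice these come from your own files.
DOCUMENTS = [
    "Client onboarding: send the welcome pack, then book a kickoff call within five days.",
    "Proposals: open with the client's goal, keep to two pages, end with fixed pricing.",
    "Refunds: approved by the account manager for orders under 30 days old.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Put the retrieved text ahead of the question, ready to send to a model."""
    context = "\n".join(retrieve(question, documents))
    return f"Use only this company information:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How do we write proposals?", DOCUMENTS)
```

Here `prompt` carries the proposals guideline alongside the question, so the model answers from your material rather than from generic experience.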

The brief (what you want the AI to do, in what tone, within what boundaries) is what engineers call a system prompt. Again, the mechanics matter less than the principle: you have to tell it what good looks like. It won't infer it.
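As a rough illustration, a brief might be packaged like this in Python. Many chat-style AI services accept a list of role-tagged messages along these lines, but the exact shape varies by provider, so treat the structure, the firm, and the wording as assumptions rather than any vendor's actual API.

```python
# Hypothetical brief for a hypothetical accounting firm.
SYSTEM_PROMPT = (
    "You answer client enquiries for a small accounting firm. "
    "Tone: warm, concise, plain English. "
    "Boundaries: never quote prices; hand pricing questions to a human."
)


def build_messages(system_prompt: str, enquiry: str) -> list[dict[str, str]]:
    """Pair the standing brief with the incoming enquiry."""
    return [
        {"role": "system", "content": system_prompt},  # the brief
        {"role": "user", "content": enquiry},          # the client's message
    ]


messages = build_messages(SYSTEM_PROMPT, "What does a year-end review cost?")
```

The brief travels with every enquiry. Change the tone or the boundaries in one place and every future response is shaped by the new instructions.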

And the feedback loop is the part most founders skip entirely in the early stages, then wonder why the output drifts. AI systems need to be monitored, corrected, and refined. That's not a bug in the technology. That's just how you manage capable people, too.
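A feedback loop can start very small. The Python sketch below assumes a person reviews each AI draft and records whether it was approved; the `Review` record and the `correction_rate` measure are hypothetical names for illustration, not a standard.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """One human check of one AI draft."""
    enquiry: str
    ai_draft: str
    approved: bool
    correction: str = ""  # what the reviewer changed, if anything


def correction_rate(reviews: list[Review]) -> float:
    """Share of drafts that a human had to correct (0.0 when nothing is logged)."""
    if not reviews:
        return 0.0
    return sum(not r.approved for r in reviews) / len(reviews)


log = [
    Review("Opening hours?", "We're open 9 to 5, Monday to Friday.", True),
    Review("Pricing?", "A review costs £500.", False, "Do not quote prices."),
]
rate = correction_rate(log)
```

Watching that rate week by week tells you whether the setup is improving or drifting, and the recorded corrections tell you what to change in the brief.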

A practical way to think about this

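Before you evaluate any AI use case for your business, run it through this simple frame, which turns the four needs above into questions:

- What context about the business does the AI need?

- What information does it need access to?

- What is the brief: what does good look like, and where are the boundaries?

- How will you give feedback and course-correct when the output isn't right?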

If you can answer those four questions clearly, you're ready to build. If you can't, you need more thinking before you need more technology.

One more thing worth saying directly

AI is not magic. It is also not hype. It sits in a more useful, and more demanding, place than either of those narratives.

It is genuinely capable of doing work that used to require people: reading documents, drafting correspondence, categorising data, answering questions, triaging requests. Deployed well, it frees your team to focus on the work that actually requires human judgment.

But "deployed well" is doing a lot of work in that sentence. It requires clarity on what you're trying to solve, care about how the AI is set up, and ongoing attention to how it performs.

The founders who get real value from AI are the ones who treat it like a capable new hire, not a light switch.

Next in the series: Your business runs on a coordination tax. Most founders pay it forever.
